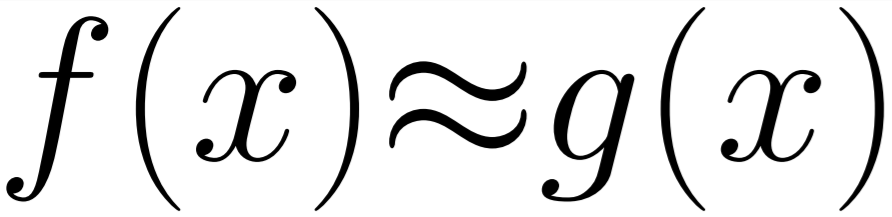

This is a follow-on from the last post, where we discussed improving neural network performance by using cheaper approximate functions in place of the costly full-fat activation functions.

In machine learning, an activation function is used to decide which neurons should be activated. It transforms the signal passed from one layer to the next. To fit complex functions, the activation must be non-linear; otherwise the network is only capable of simple linear regression.
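
To make the non-linearity point concrete, here is a minimal sketch in plain Python. The weight matrices `W1` and `W2` and the input `x` are made-up illustrative values, not anything from our network. It shows that two stacked layers with no activation give the same output as a single linear layer, while putting `tanh` between them does not.

```python
import math

def matvec(W, x):
    """Multiply matrix W (list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def matmul(A, B):
    """Multiply matrix A by matrix B (both lists of rows)."""
    cols = list(zip(*B))
    return [[sum(a * b for a, b in zip(row, col)) for col in cols] for row in A]

# Hypothetical weights and input, chosen only for illustration.
W1 = [[1.0, 2.0], [0.5, -1.0]]
W2 = [[2.0, 0.0], [1.0, 3.0]]
x = [0.3, -0.7]

# Two layers with no activation in between...
stacked = matvec(W2, matvec(W1, x))
# ...are equivalent to one layer whose weights are W2 @ W1.
collapsed = matvec(matmul(W2, W1), x)

# With a non-linear activation between the layers the collapse no longer holds.
with_tanh = matvec(W2, [math.tanh(v) for v in matvec(W1, x)])

print("stacked:  ", stacked)
print("collapsed:", collapsed)
print("with tanh:", with_tanh)
```

However many purely linear layers are stacked, the result can always be folded into one matrix, which is why the activation has to bend the signal somewhere.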

A list of common activation functions can be found on the Wikipedia page, which also gives the properties and limitations of each.

As you can see in that table, there are a lot of them and they seem to be very strictly defined. If we stick with ‘tanh’, which we looked at in the last post, we can imagine it being implemented with more or less error. We wanted to find out whether an implementation of ‘tanh’ that allowed an enormous amount of error, but was still non-linear, would still be able to perform the same task as the standard implementation of the ‘tanh’ function.

So we came up with two alternatives: ‘ApproxTanh3’, an order 3 polynomial approximation, and ‘ApproxTanh12’, an order 12 polynomial approximation. The plot of these against the standard implementation can be seen below:

As you can see, the high order approximation is a reasonable fit to the actual tanh implementation, whereas the low order approximation basically falls off a cliff around x=0.5.
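
To give a feel for how a low order polynomial drifts away from tanh, here is a small sketch. The coefficients of our approximations are not listed in this post, so the sketch assumes a stand-in: the Taylor series of tanh about zero truncated at order 3. The helper names `approx_tanh3` and `max_abs_error` are illustrative only, and the numbers it prints describe the stand-in, not our actual ‘ApproxTanh3’.

```python
import math

def approx_tanh3(x):
    """Order 3 Taylor polynomial of tanh about 0 (an assumed stand-in)."""
    return x - x**3 / 3.0

def max_abs_error(approx, lo, hi, steps=1000):
    """Largest |approx(x) - tanh(x)| on an evenly spaced grid over [lo, hi]."""
    worst = 0.0
    for i in range(steps + 1):
        x = lo + (hi - lo) * i / steps
        worst = max(worst, abs(approx(x) - math.tanh(x)))
    return worst

# Error grows quickly as the input range widens.
for limit in (0.5, 1.0, 2.0, 3.0):
    err = max_abs_error(approx_tanh3, -limit, limit)
    print(f"|x| <= {limit}: max error {err:.4f}")
```

The polynomial tracks tanh closely near zero and diverges without bound further out, since a cubic cannot saturate the way tanh does.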

So, what happens when we use this in a real neural network?

For this task we have chosen the standard MNIST handwriting dataset and a pretty simple convolutional neural network.

The dataset is a very common one: a collection of 28x28 pixel images of handwritten digits, each labelled with the digit it represents. The standard test error rate of a convolutional neural network on this dataset is between ~0.2% and ~1.7% for different standard implementations.

Our neural network looks like this, where {CHOSEN ACTIVATION} is either one of our approximations or the real ‘tanh’ implementation:

    Conv2D(32, (5, 5), input_shape=(1, 28, 28), activation='relu')
    MaxPooling2D(pool_size=(2, 2))
    Dropout(0.2)
    Flatten()
    Dense(128, activation={CHOSEN ACTIVATION})
    Dense(num_classes, activation='softmax')
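
For readers who want to try something similar, here is one way the placeholder could be filled in, as a sketch under assumptions rather than our exact code. It assumes a TensorFlow installation with its bundled Keras, a channels-last input shape of `(28, 28, 1)` instead of the `(1, 28, 28)` shown above, and a stand-in `approx_tanh3` built from the order 3 Taylor polynomial of tanh. `build_model` is a hypothetical helper name. Keras accepts any callable that maps a tensor to a tensor as an `activation`.

```python
def approx_tanh3(x):
    """Assumed stand-in activation: order 3 Taylor polynomial of tanh.

    Uses only arithmetic operators, so it works on floats and on tensors.
    """
    return x - x**3 / 3.0

def build_model(activation, num_classes=10):
    """Build the small CNN with the given activation in the hidden Dense layer."""
    # Imported here so the activation above can be used without TensorFlow.
    from tensorflow import keras
    from tensorflow.keras import layers

    return keras.Sequential([
        keras.Input(shape=(28, 28, 1)),  # assumption: channels-last MNIST images
        layers.Conv2D(32, (5, 5), activation="relu"),
        layers.MaxPooling2D(pool_size=(2, 2)),
        layers.Dropout(0.2),
        layers.Flatten(),
        layers.Dense(128, activation=activation),  # the swapped activation
        layers.Dense(num_classes, activation="softmax"),
    ])
```

Calling `build_model(approx_tanh3)` gives the approximate variant, and `build_model("tanh")` gives the standard one, so the two can be trained and compared with everything else held the same.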

Using this network with the same seed for each run, we get these results:

| Function | Test error rate (%) |
|---|---|
| Keras.Tanh | 0.97 |
| ApproxTanh3 | 0.84 |
| ApproxTanh12 | 1.31 |

This was very surprising to us. We expected the terrible order 3 approximation to break the neural network's ability to fit the problem, but its results were within the range we expected and beat the standard implementation's error rate for this specific seed (other runs show some variance of +/- one percent).

In this example, our terrible activation function was able to match the standard implementation. It seems that for certain problems, where the inputs to the activation stay within certain ranges, the exact shape of the activation function isn’t important as long as it is vaguely correct within the working range.

This means that there is a large range of error tolerance in this system, a fact which, it seems, could be exploited in some networks to reduce learning times!