In our research we are investigating existing applications that tolerate functions returning a value within a tolerance range, rather than relying on absolute unit-in-last-place guarantees.

Machine learning is a currently popular area of computing that happens to fit this paradigm. From specialised GPUs to TPUs, we can see large teams approaching machine learning with variable-precision stages and noise-inducing layers to speed up or aid the learning process.

While many areas here show some level of error tolerance, we have chosen to focus on the activation functions. In particular, we are looking at the popular ‘tanh’ activation.

The function ‘tanh’ is useful due to its non-linearity and convenient output range of [-1,1]. Unfortunately, ‘tanh’ is an expensive function to compute accurately.

So, if we want to improve this we need to begin with the facts we know already:

Some activation layers in a neural network can be expensive

Machine learning algorithms often use low-precision floats (16/32-bit)

Floating-point cannot give absolutely correct answers for real numbers

Inputs to some learning algorithms are often normalised to be values between zero and one

Precision between zero and one in floating-point is non-linear: representable values are spaced further apart nearer one, so rounding error is higher in functions working closer to one

Approximations of complex functions can result in better performance

Machine learning is inherently error-tolerant

Machine learning has a major problem with run-times being too long
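Two of the facts above, the use of 16/32-bit floats and the uneven spacing of floating-point values between zero and one, can be checked with nothing but the Python standard library. The sketch below is only an illustration: the helper names `round_trip` and `rounding_error` are our own, and the sample inputs are arbitrary. It requires Python 3.9 or later for `math.ulp`.

```python
import math
import struct

def round_trip(fmt, x):
    """Round a Python float (binary64) to a narrower IEEE format and back.

    "e" is binary16 (half precision), "f" is binary32 (single precision).
    """
    return struct.unpack(fmt, struct.pack(fmt, x))[0]

def rounding_error(fmt, x):
    """Absolute error introduced by storing x in the given format."""
    return abs(round_trip(fmt, x) - x)

if __name__ == "__main__":
    # Arbitrary sample inputs in the normalised range (0, 1).
    for x in (0.001, 0.01, 0.1, 0.999):
        print(
            f"x={x:6} "
            f"ulp64={math.ulp(x):.2e} "
            f"err32={rounding_error('f', x):.2e} "
            f"err16={rounding_error('e', x):.2e}"
        )
```

The gap between neighbouring values (the ulp) grows as the input approaches one, so the absolute rounding error that a 16-bit float can introduce near one is far larger than near zero.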

From these facts we can assume:

The current ‘tanh’ function implementation has an acceptable and variable level of error

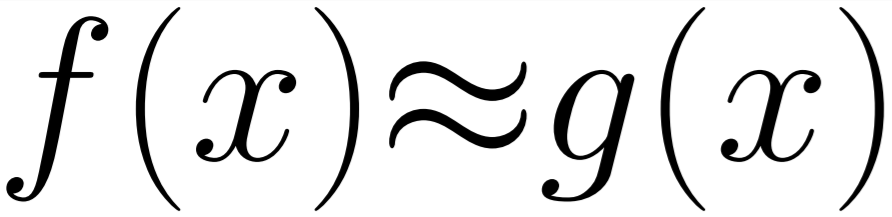

An approximation of ‘tanh’ which has better performance and acceptable levels of error will not impact on the overall outcome of the learning process and be valuable to the programmer

Any implementation which does not impact the overall result negatively and improves run-time would be a positive contribution.

So, to show that our assumptions hold in the real world, we need to test each one.

1) This is the simplest assumption to test. The TensorFlow website provides a few machine learning tutorials, one of which is on Text Classification. This example uses a sigmoid activation in its final step. Changing this to a ‘tanh’ activation has no negative impact on the learning process: the accuracy of the output model remains in the 80-90% range.

Since we are using ‘tanh’ and the output is acceptable, the error that we know it has due to its underlying floating-point type must be tolerable, and it hasn’t impacted the convergence of the learning process.

2) Next we want to take an approximation and compare the result of using it against the official implementation, to see whether it has any different characteristics which might be detrimental.

To do this we must select an implementation for the replacement. We have kept to a simple polynomial for this example, as the resulting error is very small on the limited input range we are using ([0,1]), although there are other, better-performing approximations with different associated costs.
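To make the idea of a polynomial replacement concrete, here is a minimal sketch. It is not the polynomial used in our tests: the coefficients are not listed in this post, so the sketch assumes the degree-15 Taylor polynomial of tanh about zero and evaluates it with Horner's rule. A Taylor polynomial is at its worst at the edge of the range (x = 1), and a polynomial fitted to [0,1] would typically do better at a similar degree. The names `TANH_TAYLOR`, `tanh_poly` and `max_abs_error` are our own.

```python
import math

# Odd Taylor coefficients of tanh(x) about 0, for x^1, x^3, ..., x^15.
# Assumption: illustrative only, not the coefficients used in the tests.
TANH_TAYLOR = (
    1.0,
    -1.0 / 3.0,
    2.0 / 15.0,
    -17.0 / 315.0,
    62.0 / 2835.0,
    -1382.0 / 155925.0,
    21844.0 / 6081075.0,
    -929569.0 / 638512875.0,
)

def tanh_poly(x):
    """Evaluate the degree-15 Taylor polynomial of tanh via Horner's rule in x*x."""
    x2 = x * x
    acc = 0.0
    for c in reversed(TANH_TAYLOR):
        acc = acc * x2 + c
    return x * acc

def max_abs_error(samples=1001):
    """Largest |tanh_poly(x) - math.tanh(x)| over evenly spaced points in [0, 1]."""
    return max(
        abs(tanh_poly(i / (samples - 1)) - math.tanh(i / (samples - 1)))
        for i in range(samples)
    )

if __name__ == "__main__":
    print(f"max abs error on [0, 1]: {max_abs_error():.2e}")
```

Because only odd powers appear, Horner's rule in x*x needs eight multiply-adds plus one final multiply, with no exponentials or divisions at run time.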

With our replacement activation function selected, we simply replace the final activation layer to point to our function and can then run the learning process. To ensure fairness between all implementations, we use a fixed seed and reset the global state of TensorFlow for each run. This allows us to see the ways in which the implementations may diverge through the learning process.
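The procedure above might look roughly like the following sketch. Everything here is a stand-in rather than our actual test code: the model layout, the loss, the optimiser and the short four-term polynomial are placeholders, and `poly_tanh` and `run_trial` are hypothetical names. It assumes a TensorFlow version that provides `tf.keras.utils.set_random_seed` (2.7 or later).

```python
# Hypothetical short polynomial (first four odd Taylor terms of tanh),
# used only to show how a custom activation is plugged in.
COEFFS = (1.0, -1.0 / 3.0, 2.0 / 15.0, -17.0 / 315.0)

def poly_tanh(x):
    """Works on Python floats and on TensorFlow tensors alike,
    because it only uses * and +."""
    x2 = x * x
    acc = COEFFS[-1]
    for c in reversed(COEFFS[:-1]):
        acc = acc * x2 + c
    return x * acc

def run_trial(activation, seed, x_train, y_train, epochs=20):
    """Train a small placeholder model with the given final activation."""
    import tensorflow as tf  # imported lazily; only this function needs it

    tf.keras.backend.clear_session()      # reset Keras global state
    tf.keras.utils.set_random_seed(seed)  # seeds Python, NumPy and TensorFlow

    model = tf.keras.Sequential([
        tf.keras.layers.Dense(16, activation="relu"),
        tf.keras.layers.Dense(1, activation=activation),  # layer under test
    ])
    model.compile(optimizer="adam", loss="mse", metrics=["accuracy"])
    history = model.fit(x_train, y_train, epochs=epochs, verbose=0)
    return history.history  # per-epoch metrics, for comparing runs

# Usage (assuming x_train and y_train are already loaded):
#   baseline  = run_trial("tanh", seed=42, x_train=x_train, y_train=y_train)
#   candidate = run_trial(poly_tanh, seed=42, x_train=x_train, y_train=y_train)
```

Calling `run_trial` with the same seed for each activation means both runs start from the same initial weights, so any difference in the per-epoch metrics can be attributed to the activation function.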

The tests give us the above results. The first chart is the standard implementation, while the other three are order-16 polynomial implementations in 64, 32 and 16-bit floating-point. As can be seen, there is very little difference between the approximations. The approximations do diverge from the official implementation around epochs 15-20. This divergence is expected due to the accumulation of small differences between the implementations, but it has no impact on the overall accuracy of the resulting model. In all tests that were run, the approximations and the official implementation converged to around the same accuracy.

This shows that the approximations do not negatively impact the overall result of the model.

NOTE: These tests were all trained on a CPU due to the NVIDIA libraries being non-deterministic. This non-determinism prevented 1-to-1 comparison of different activation functions with the same seed.

3) Next we need to consider the performance impact. We are limited to working with a very small model, because problems with NVIDIA’s implementation prevent 1-to-1 testing on the GPU. The activation layer is therefore only a small part of a small model, which makes it difficult to measure accurately through the noise. To get around this, we test the implementations on the CPU separately (until we do further work with more time!).

Run on (8 X 3600 MHz CPU s)

CPU Caches: L1 Data 32K (x4), L1 Instruction 32K (x4), L2 Unified 262K (x4), L3 Unified 8388K (x1)

| Benchmark | Time |
|---|---|
| Tanh Standard Implementation | 212 ms |
| Order 16 Polynomial Implementations: | |
| SSE 64bit Float | 114 ms |
| Nonvectorised 64bit Float | 144 ms |
| SSE 32bit Float | 114 ms |
| Nonvectorised 32bit Float | 129 ms |

Different machine learning libraries will take different approaches to vectorisation. As our functions are trivially vectorised on the CPU, we see huge improvements over the standard ‘tanh’ performance, but we also see large improvements even in the non-vectorised implementations.

A saving of roughly 32-46% in activation time (212 ms down to 114-144 ms) is significant when running a network with hours spent training, even if only a portion of that time is spent in the activation functions.
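For reference, the arithmetic behind these figures can be reproduced from the benchmark timings above. The sketch also applies Amdahl's law to estimate the effect on a whole training run. The 20% share of run time spent in ‘tanh’ is purely a hypothetical figure for illustration, as we have not measured it, and the function names are our own.

```python
# Timings in milliseconds, copied from the benchmark results in this post.
BASELINE_MS = 212
POLY_MS = {
    "SSE 64bit Float": 114,
    "Nonvectorised 64bit Float": 144,
    "SSE 32bit Float": 114,
    "Nonvectorised 32bit Float": 129,
}

def reduction_percent(baseline, candidate):
    """Percentage of the baseline time that the candidate saves."""
    return 100.0 * (baseline - candidate) / baseline

def overall_speedup(activation_fraction, activation_speedup):
    """Amdahl's law: whole-run speedup when only the activation part gets faster."""
    return 1.0 / (
        (1.0 - activation_fraction) + activation_fraction / activation_speedup
    )

if __name__ == "__main__":
    for name, ms in POLY_MS.items():
        print(f"{name}: {reduction_percent(BASELINE_MS, ms):.1f}% less time")

    # Hypothetical: assume 20% of total training time is spent in tanh.
    speedup = overall_speedup(0.20, BASELINE_MS / POLY_MS["SSE 32bit Float"])
    print(f"overall speedup at a 20% activation share: {speedup:.2f}x")
```

The overall gain depends heavily on how much of the run is actually spent in the activation function, which is why measuring that share on larger models is the natural next step.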

Conclusion

With these three assumptions shown to hold in limited practice, we are in a good position to make stronger assertions for larger neural networks, and hopefully to present a method for improving the performance of your TensorFlow models without having to make any tangible sacrifice!