We are all familiar with compilers being helpful by taking duplicate calls to side-effect free functions and changing them to only be called once to save on wasted computation. Effectively creating a local cache of answers in a scope. For example:

void someFunction(int a, int b) { int c = Add(a,b); int d = Add(a,b); int e = Add(a,b); } Can Become.... void someFunction(int a, int b) { int f = Add(a,b); int c = f; int d = f; int e = f; }

This rearranging effectively saves on two calls to 'Add' and in long functions where there is multiple uses the new 'f' variable is a little cache to reference back to.

In a real-time scenario we might manually code a wrapper around some of the functions to store the results many calls to a complex function for some inputs. This would allow us to store the results of the function call cache in a global scope. So that a call to 'Add(1,1)' which had already been computed earlier could be returned from memory. This is very useful in the real world for things like journey planning where the directions from one place in a country to another may take a long time to compute so they might be stored if they are frequently requested to save on resources expensively calculating a result which shouldn't change.

Taking this example of journey planning, we may have a long journey from 'Edinburgh Castle' to '21B Baker Street, London' which took a long time on our system to accurately calculate and give to our user. Now, if another user want the directions from 'Edinburgh Castle' to '22B Baker Street, London' which is just next door to the end point of the last journey we calculated then we are given two choices, a) we can spent our time and resources calculating a new path or b) we can give them the directions to 21B Baker Street and trust the user that they will know to read the house numbers and will find the right place. In this example we are treating the end-user as an element in our system that is resilient to errors of up-to one house. If we were to be robust we would take the directions for 21B Baker Street and then append the much smaller and easier computed directions of how to find the house next door, but we will consider this unnecessary for the current use case.

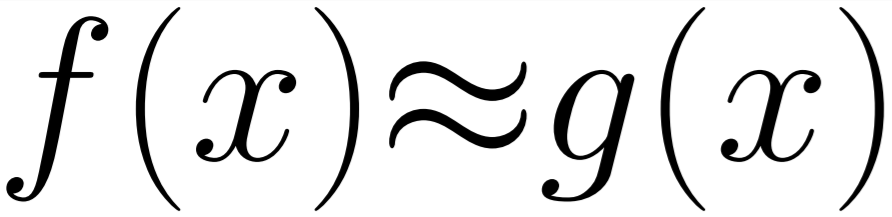

This kind of optimisation, using a function call cache with some approximation, can be applied to many types of functions and algorithms for a limited overhead and for expensive solutions will produce huge wins but only when the next stage of the process is resilient to level of error it is given. This type of optimisation wouldn't work if the range of error in the inputs that is allowed is too great.

So, what if I wanted to apply this generically to any function in my program? Well, thats where we can get a bit silly with templates and create a function which looks a bit like this:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 | template<typename T> bool CheckValid(const T& inputValue, const T& referenceValue, const double& percentage) { const double min = (double)(referenceValue)-(double)(referenceValue*(1.0 - percentage)); const double max = (double)(referenceValue)+(double)(referenceValue*(1.0 - percentage)); if (inputValue < min || inputValue > max) { return false; } return true; } template<typename T, typename ret, typename argV> const argV MemberVariablePointer(const char* voidFi, const int& _index, const T& _offsets) { const char* dataPointer = voidFi + _offsets[_index];// +sizeof(ret); const argV* d = (const argV*)dataPointer; return *d; } //Memoise Function #define CACHE_SIZE 10 template<typename Fn, Fn func, int percentageErrorAllowed, typename... Args> typename std::result_of<Fn(Args...)>::type MemoiseWrapperFunction(Args&&... args) { ////Approximate Information static std::array< FunctionInformation<std::result_of<Fn(Args...)>::type, Args...>, CACHE_SIZE > globalFunctionResultCache; static int currentIndex = 0; constexpr double percentageError = double(percentageErrorAllowed) / (100.f); constexpr unsigned int inputVariableCount = sizeof...(Args); constexpr auto argOffsets = GetTypesOffsets<Args...>(); //Check if there is a stored close enough value for (int ii = 0; ii < CACHE_SIZE; ii++) { const char* voidFi = (const char*)&globalFunctionResultCache[ii]; bool inRange = true; int variableIndex = 0; const bool results[] = { (CheckValid( args, MemberVariablePointer < decltype(argOffsets), std::result_of<Fn(Args...)>::type, std::remove_reference<decltype(args)>::type > (voidFi, variableIndex++, argOffsets) , percentageError))... }; for (const bool res : results) { if (!res) { inRange = false; break; } } if (inRange) { //We have a match in the cache. return globalFunctionResultCache[ii]._returnValue; } } //No match found. //Do work and store cache result const int writeindex = currentIndex; globalFunctionResultCache[writeindex]._returnValue = func(std::forward<Args>(args)...); globalFunctionResultCache[writeindex].Set(std::forward<Args>(args)...); //Setup next cache index currentIndex++; currentIndex = currentIndex % CACHE_SIZE; //Return results return globalFunctionResultCache[writeindex]._returnValue; } #define MemoWrapper(FUNC, ERRORPERC) MemoiseWrapperFunction<decltype(&FUNC), &FUNC, ERRORPERC> |

WAIT! Don't run! It isn't as terrible as it looks!

Lines 7-11 contain the static and constexpr values needed to store the results of the function and calculate if new inputs are within range of any in the cache.

The cache is implemented as a fixed size array. (Note: in a more robust implementation you can remove a lot of overhead and value checking with a hashing function that stores results nearby based on input values - but that is a little complex for this example).

The following loop simply checks the cache for the input variables that could be near enough to allow it to return the pre-computed answer. This is done mostly on line 40, which builds and array with entries for each input value which says whether it is valid or not. This is done through the expansion of the variadic input, making it a little difficult to read due to that feature of C++ being a little troublesome to write nicely. This can be tidied into a simpler input if we change the cache-system but it is robust and clear enough for this demo.

After the loop is simply running the function (line 63) and storing the result in the next cache spot. (Note: An ideal implementation wouldn't evict the next cache spot for its result, but replace the "coolest" spot so that the most frequently referenced stay in the cache)

The templates for the MemoiseWrapperFunction allow us to pass in a target function and the percentage accuracy we want. This means we call the wrapped function like this:

1 2 3 4 5 6 7 8 9 10 11 12 13 | int add(int _a, int _b) { return _a + _b; } void ApproxCacheTest() { int ff0 = MemoWrapper(add, 80)(5, 5); // returns 10 int ff1 = MemoWrapper(add, 80)(2, 1); // returns 3 int ff5 = MemoWrapper(add, 80)(5, 33); // returns 38 int ff6 = MemoWrapper(add, 80)(5, 6); // returns 10 because of ff0 being - // in the cache and within the approximate range (80%). } |

(Note: This calling convention could be changed to be like our overrides in our generated approximate examples from last month, but this is fine for a simple working prototype)

As you can see in the example code above, the result of ff6 is given the result from ff0 because each input in (5,6) is within 80% of the range of (5,5).

This example uses a simple addition function which is obviously simpler than the overhead of checking the cache and comparing values. In a real-world system you would want to replace that with a very complex and expensive function like the journey planning example.

This is a very complex implementation of a quite simple idea because of being made into a generic solution. For a lot of problems, a hand-crafted solution might have a lower overhead.

When using this system, the size and behaviour of the cache will play a large part in whether it is effective at reducing complexity on very large scale systems due to the additional overhead costs which need to be justified by a certain percentage of cache hits to match the additional costs.

I will follow this post up with a more detailed and tidy example implementing the ideas I have dotted around this implementation.

Thanks for reading!