As I have written about before, we all know that our floating point calculations contain error from accumulated rounding error and that error can distribute unevenly across the range of inputs for that function based on the distribution of results in each calculation.

Checking this error at run-time would involve a lot of overhead but having a method to analyse the function before the program is released is useful for detecting any edge cases and getting an idea of the minimum and maximum error to help you calculate a correct epsilon for handling errors. (Ask me about epsilons in physics libraries to get my full rant on this...)

Luckily, this kind of function checking is very simple. We simply need to create a replacement 'float' type which mimics all floating point calculations at the highest precision and stores this error in the type. This type can then combine it's own error with others when used together to get an understanding of the cumulative offset.

A class which replaces a standard type like this will need to have the correct constructors and operator overrides to cover all the use cases so that we don't get compile errors or type mismatches or even worse - blindly building a new instance and losing our cumulative error count.

The main elements of this class are the 'float' value it is wrapping and a high precision error counter. We can't use float for this error counter as it will be vulnerable to the same level of rounding error as the float that it is wrapping.

(Optionally: I added a string to keep the name of the float to make debugging and checking that we are counting the error correctly easier)

With that in mind, you should end up with a class that looks something like this:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 | class FloatError { public: FloatError(float _x) { static int floatIDCounter = 0; m_name = "FloatID_"; m_name.append(std::to_string(floatIDCounter)); m_value = _x; m_error = 0.0; } /* other constructors go here... */ //Operators overload FloatError operator+(const FloatError& _in) { float val = m_value + _in.m_value; long double highResResult = ((long double)m_value + (long double)_in.m_value); long double error = abs((highResResult - (long double)val )); m_error += error + _in.m_error; return FloatError(val, m_error); } /* Others go here... */ std::string m_name; float m_value; long double m_error; }; |

This implementation of the operator+ override is the important part here. Each operation is repeated while cast to the higher precision type. The difference of these two results is then computed to give us a high level approximation of the rounding error that has been incurred. This result is then combined with the errors of the two numbers being summed to give us the total error in the final result.

It would be tempting in this implementation to not take the absolute value of the error to store the total offset from the correct answer rather than allowing errors to cancel each other out by taking the negative and positive errors. However, that would only be correct for measuring when values are added or subtracted. When dealing with all operations we would have to handle how the offset value of the multiply or division would increase error with the number it is being applied to - which is a more complex problem than we are addressing here. In this instance we are only looking at analysing the total rounding error - for now.

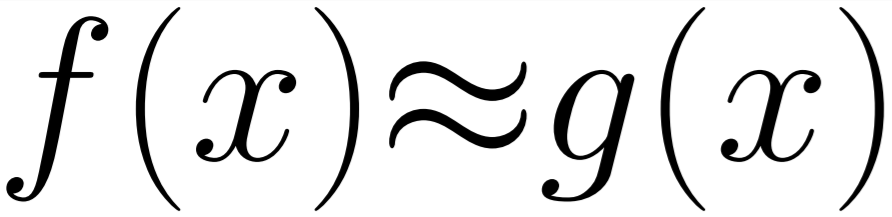

With this all in place we want to be able to use this class on a function taking floats. The example we will use will be a function which takes a fixed array of floating point numbers and add them all together returning the sum value.

1 2 3 4 5 6 7 8 9 10 11 | #define float FloatError float Sum(std::array<float, 200>& _in) { float err(0.0); for (auto i : _in) { err = err + i; } return err; } #define float float |

In the code example you will see that we have wrapped the function in a define to override the float type and replace it with our FloatError type. We can then call this function from our test function which generates arrays of random values in a fixed range and then tests the Sum function with those values to try and find a maximum and minimum error in that range. (Note: for any array of random values in this example there should exist values that produce zero error.)

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 | void TestFloatError(const float _min = 0.f, const float _max = 1000.f, const int _sampleCount = 50) { std::array<FloatError, 200> numbers; float lo = _min; float hi = _max; long double minerr = 1000000.f; long double maxerr = 0.f; for (int j = 0; j < _sampleCount; j++) { for (int i = 0; i < 200; i++) { numbers[i] = getRandom(lo, hi); } FloatError errRes = Sum(numbers); std::cout << "ERROR: " << errRes.m_error << ".\n"; minerr = std::min(minerr, errRes.m_error); maxerr = std::max(maxerr, errRes.m_error); } std::cout << "MIN ERROR: " << minerr << ".\n"; std::cout << "MAX ERROR: " << maxerr << ".\n"; } |

Once it has tested the function multiple times it will output and min and max error while also outputting the error for each sample as it runs. Which in the end looks like this for the range (0,1000.f):

ERROR: 0.219635. ERROR: 0.202362. ERROR: 0.211487. ERROR: 0.231384. ERROR: 0.206512. ... Many results ... ERROR: 0.218292. ERROR: 0.212372. ERROR: 0.235107. ERROR: 0.181488. ERROR: 0.217163. ERROR: 0.231659. ERROR: 0.227386. ERROR: 0.22171. ERROR: 0.2005. ERROR: 0.221191. ERROR: 0.23172. ERROR: 0.243927. ERROR: 0.213196. ERROR: 0.214783. ERROR: 0.219055. ERROR: 0.229004. MIN ERROR: 0.181488. MAX ERROR: 0.243927.

So we can see that the total error that is possible in even this small sample is not insignificant - and that is only with additions of float values which already sit on floating point steps.

I hope this was useful to you and that you can apply it in your own code to help visualise the error that is hidden in even your simplest algorithms!