As I begin the run-up to the work that will make up my final thesis, I want to set down the theory we are following and how we plan to evaluate it.

We propose that, with Moore’s Law coming to an end and the “Free Lunch” no longer on offer as multiprocessor systems run up against the limits set by Amdahl’s Law, developers will have to look beyond standard scalability optimisation and accurate program transforms to get the best performance from the software that they write.

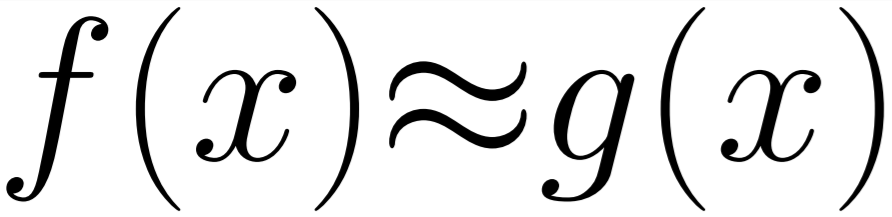

As a result they will need to use appropriate approximation to write code that performs only the computations needed to reach the desired result. As computing currently stands, we frequently compute answers to the highest possible precision, even when that isn’t necessary. This is the standard behaviour of most shared libraries (as we have spoken about on this blog before) and leads to a significant waste of energy worldwide.

At a small scale this kind of optimisation is already taking place for specialised use-cases - such as limited hardware or the games industry. However, scaling the solution to be part of the standard software engineering pipeline is more difficult. Currently, hand-crafting solutions to these problems can take many hours of an engineer’s time, and validating the solution, especially when a code base is changing, is very challenging.

To automate this process we need to change the whole hardware-agnostic software development pipeline.

We want programmers to be able to specify a function with an output type, a desired accuracy and, for each input to the function, a valid input range. These annotations can then be evaluated by the compiler and used to automatically produce safe transforms which will improve performance.
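To make the idea concrete, here is a minimal sketch in Python of what an accuracy and input range annotation could drive. It is only an illustration and not the pipeline we will build: the names `Spec`, `terms_needed` and `specialise_sin` are hypothetical, and a truncated Taylor series for `sin` stands in for whatever transforms a real compiler would choose. Because every derivative of `sin` is bounded by 1, the error after `n` terms on inputs with `|x| <= r` is at most `r^(2n+1) / (2n+1)!`, so the smallest `n` that meets the requested accuracy can be found ahead of time.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Spec:
    """Hypothetical annotation: what the caller promises and what it accepts."""
    accuracy: float  # maximum absolute error the caller will tolerate
    lo: float        # smallest valid input
    hi: float        # largest valid input


def terms_needed(spec: Spec) -> int:
    """Smallest number of Taylor terms of sin(x) that meets spec.accuracy.

    With n terms (degrees 1, 3, ..., 2n-1) the Lagrange remainder on
    |x| <= r is bounded by r**(2n+1) / (2n+1)!.
    """
    r = max(abs(spec.lo), abs(spec.hi))
    n = 1
    while r ** (2 * n + 1) / math.factorial(2 * n + 1) > spec.accuracy:
        n += 1
    return n


def specialise_sin(spec: Spec):
    """Stand-in for the compiler: build a sin that does only the work needed."""
    n = terms_needed(spec)  # decided once, before any call is made

    def approx_sin(x: float) -> float:
        if not spec.lo <= x <= spec.hi:
            raise ValueError("input outside the declared range")
        total, term = 0.0, x
        for k in range(n):
            total += term
            # Next term: multiply by -x^2 / ((2k+2)(2k+3)).
            term *= -x * x / ((2 * k + 2) * (2 * k + 3))
        return total

    approx_sin.terms = n  # exposed so the chosen cost can be inspected
    return approx_sin


if __name__ == "__main__":
    coarse = specialise_sin(Spec(accuracy=1e-3, lo=-math.pi / 4, hi=math.pi / 4))
    fine = specialise_sin(Spec(accuracy=1e-12, lo=-math.pi / 4, hi=math.pi / 4))
    print(coarse.terms, fine.terms)
    print(abs(coarse(0.5) - math.sin(0.5)))
```

With an accuracy of `1e-3` on the range `[-π/4, π/4]` the sketch settles on three terms, while a tighter accuracy on the same range needs more. The point is that the cost of the function follows from what the caller declared, rather than from the worst case a general-purpose library has to assume.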

With this system a library could be provided semi-compiled. When a function from that library is used, the call can be marked up with the required accuracy and input ranges, so that the function is compiled into the existing program optimally and wasted computation is avoided.

This does mean that linking to existing dynamic libraries would create a boundary across which approximations could not be assumed, and such programs would therefore perform worse than full or partial source compilations.

With this setup it would be possible to compile approximately in a generic way while still allowing specific optimisations for specified hardware. This would allow us to tackle global data centre energy waste by reducing the overall power needed to run programs.

Nick.