In 1952, two years after his involvement in the invention of the first stored program computer, David Wheeler proposed the idea of the subroutine. A simple idea which is now ubiquitous throughout computing.

David Wheeler, first person ever to earn a PhD in Computer Science. The 50’s were unprepared for his collars.

The introduction of subroutines was proposed in ACM 52 as “a self-contained part of a programme, which is capable of being used in different programmes. It is an entity of its own within a programme”. In his paper he defines functions as well as the concept of libraries of functions.

These concepts define the fundamental pattern of modern software engineering. It has become common place to emphasise the phrase “Don’t reinvent the wheel” to new programmers to teach that it is bad practice to write code for anything that already exists and works unless they have a very good justification. It is much more common for people to use code from a popular or tested library for any problem which is not highly specific to the task at hand.

In his paper Wheeler outlines the standard specification of a library: well documented, highly specified and appropriately accessible. Although he refers to problems related to punch cards and paper tape, the concepts he introduced have remained surprisingly unchanged. He even went as far as to point out that documentation for the library may be the hardest part - a highly prescient statement for the state of libraries sixty years later.

Before we get ahead of ourselves – this is not an article in praise of David Wheeler’s subroutines, quite the opposite. While this contribution has been the basis of so much of our software engineering design principles and undoubtably we would not have come so far in computer software without such a pivotal idea being proposed. This is in fact an article to outline the problems of this exact approach and how in the age of widespread computing the simple principle of library-based subroutines may be more akin to a dark pattern.

Subroutines were proposed for use on Electronic delay storage automatic calculator (EDSAC)

This drastic attack on the major contribution of a famous computer scientist (renowned hero of my department no less!) can thankfully be explained and defended with his own words: “All problems in computer science can be solved by another level of indirection”. In this quote he is expressing the common idea in computer science that adding abstractions to computing problems often makes them easier to solve, understand or integrate into existing solutions.

The concept of abstraction can be demonstrated by looking at programming languages which are themselves an abstraction built between the programmer and the lowest level of computer instructions. Without modern programming languages it would be practically impossible to maintain and test the large code-bases and complexity of systems we now require as part of managing the modern world.

What is unsaid in the quote is that abstraction is not free. Each level of indirection comes at a cost. We could expand on the quote by saying “Each level of indirection required to solve the problem easily, increases the price of solving the problem above its true cost”.

We can return to our programming language example to show how this becomes true. In the old days of Wheeler working on EDSAC the instructions would have been written specifically for the machine that he was working on. His library would have consisted of subroutines written with his exact hardware and use-cases in mind. When we add the indirection of a programming language, we have added a barrier between the hardware and the programmer. We can no longer guarantee that the instructions the user expects to emerge after the compilation process of their language will in fact be the ones they expect. This is definitely the case when we consider modern design processes which target multiple hardware configurations from a single programming language abstraction. We have in effect removed the guarantee of optimal code output in exchange for the ability to solve the problem of complex programming or multiple hardware targets.

Once again Wheeler can defend me in my attack on our use of subroutines. In his paper on subroutines he gives two examples of what would make good subroutines but appends the suggestions with a question about how they should be implemented. He raises the problem of: Given a library which contains abstractions of problems we want to solve, how should those abstractions be implemented? No one solution will result in a library that would be optimal for all use-cases and so we would incur overhead cost for some uses.

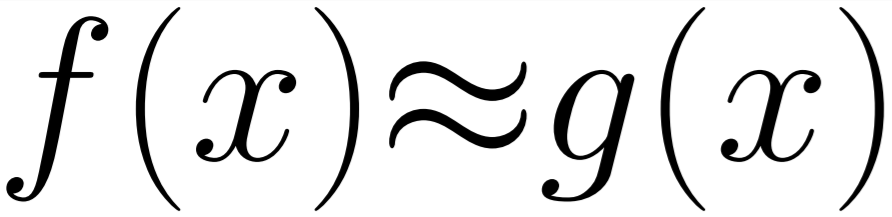

It is my belief that the current approach of implementing very rigid libraries has resulted in high costs of abstraction. Let’s take one of the example functions in Wheeler’s paper, sine(x). A reasonably complex function on which many papers have been written with aims of optimising the implementation to give minimal error. Most programming languages with a standard mathematical library will contain an implementation of sine. But, which implementation will it contain? Will it be as accurate as possible at the given precision? Is that what is required by your program? Is the cost of reaching that accuracy linear? Would you get a result that is only slightly less accurate for much less computation? These are all valid questions for someone who wants to write a program that seeks to run optimally, you could say this is a financially important (or environmentally important, depending on how you see the world) question if this is a program that is going to take up hours of processing time over multiple datacentres.

This is where subroutines as proposed by Wheeler and adopted by the whole tech world have failed us. There will be one implementation and very little choice in configuring the result without “rolling your own” and that is considered bad practice and dangerous (think of the bugs!). So, we have a generation of programmers highly reliant on prewritten solutions which are not ideal for their use-case, often with very little information to determine if they are actually what they want or measure the impact they have on the final result. They are paying a huge cost for abstraction and it is likely they don’t realise it as this is how we have taught them to program.

While this may have only been a minor inconvenience in the past, this problem may be turning into a disaster much faster than anyone might realise. We have a group of problems which are making this a major dilemma: the end of the free lunch (Moore and Amdahl), cloud computing increasing global access to large scale computing, huge increase in hardware abstractions and increased pressure for highly performant software!

My research is into optimisation for large scale computing where I am working on approaches to library development and function generation to try and increase the performance of applications without sacrificing the accuracy of the output. The majority of this work is through the removal of unneeded abstractions and correct measurement of what is truly needed for a problem. Without this work and the work of others in related fields, computing will hit a performance wall when we can no longer make chips any smaller or more parallel. As a result to get the actual optimal performance of output from the hardware we have I believe we will need to have a paradigm shift into new ways of thinking of abstractions and see Wheelers definitions as the basis for more complex but efficient library systems rather than using it as the unscalable blueprint it is.