This post focuses on how real numbers are represented in our applications. We look at how they are stored in a finite number of bits and what we must take into account to predict their behavior and use them effectively.

Real Number Representation

As you probably already know if you are reading this, types such as float and double are represented in a binary floating-point format. MSDN gives a very concise description of that format on its page about the float type:

“Floating-point numbers use the IEEE (Institute of Electrical and Electronics Engineers) format. Single-precision values with float type have 4 bytes, consisting of a sign bit, an 8-bit excess-127 binary exponent, and a 23-bit mantissa. The mantissa represents a number between 1.0 and 2.0. Since the high-order bit of the mantissa is always 1, it is not stored in the number. This representation gives a range of approximately 3.4E–38 to 3.4E+38 for type float.”

The IEEE format they are talking about is IEEE 754, which covers the rules and behavior of floating-point types in much greater detail but is a pretty heavy read (trust me, it's OK to just read the plot synopsis on this book).

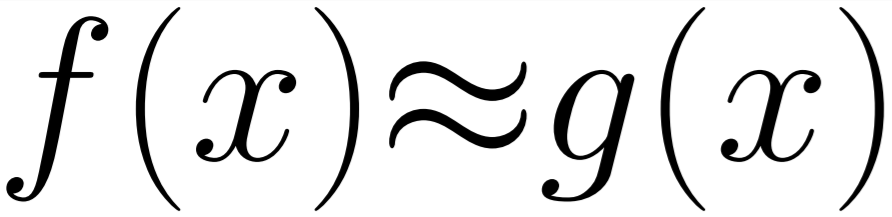

The MSDN page misses the equation to calculate a number from the representation it describes. That equation is:
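For normalized values this is the standard IEEE 754 single-precision formula. The symbol names are my own: $s$ is the sign bit, $e$ is the stored 8-bit exponent (excess-127), and $m$ is the 23-bit mantissa field read as an unsigned integer.

$$
x = (-1)^{s} \times 2^{\,e - 127} \times \left(1 + \frac{m}{2^{23}}\right)
$$

The leading $1$ inside the parentheses is the implicit high-order bit mentioned in the quote. This form applies when $0 < e < 255$; zero, subnormal numbers, infinities, and NaN use the reserved exponent values and are encoded differently.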

With this representation we can see how individual numbers are encoded bit by bit.
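As a rough illustration of that encoding, here is a minimal Python sketch that splits a value into its sign, exponent, and mantissa fields and then rebuilds it. The helper names `decompose_f32` and `reconstruct_f32` are made up for this example, and the reconstruction only handles normalized numbers.

```python
import struct

def decompose_f32(x):
    """Split x, stored as IEEE 754 single precision, into its three fields."""
    # Pack as a big-endian 32-bit float, then reread the same 4 bytes as an unsigned int.
    (bits,) = struct.unpack(">I", struct.pack(">f", x))
    sign = bits >> 31                 # 1 bit
    exponent = (bits >> 23) & 0xFF    # 8 bits, stored with a bias of 127
    mantissa = bits & 0x7FFFFF        # 23 bits, implicit leading 1 not stored
    return sign, exponent, mantissa

def reconstruct_f32(sign, exponent, mantissa):
    """Rebuild the value of a normalized float from its fields."""
    return (-1) ** sign * 2.0 ** (exponent - 127) * (1 + mantissa / 2 ** 23)

for value in (1.0, -2.0, 0.15625):
    s, e, m = decompose_f32(value)
    print(f"{value:>8}: sign={s} exponent={e:08b} mantissa={m:023b}")
```

For example, 0.15625 is 1.25 × 2^-3, so its stored exponent is 124 and only the second-highest mantissa bit is set.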

With this binary view in mind, it is clear that there is only a finite number of bits to flip and that, because of the exponent, flipping the same mantissa bit changes the value by a different amount depending on how large the number currently is.

This leads us to the main point of this article...

Unit in Last Place

When we think about representing numbers and the errors that creep into calculations, we have to consider the distance between the number currently being represented and the next representable number. Single-precision floating point (shown above) can resolve very small fractions in the range 0 to 1, but it becomes increasingly less accurate as the magnitude of the number grows, because the same 23 mantissa bits have to cover an ever wider interval. From 2^23 (about 8.4 million) upward the gap between adjacent values is at least 1, which means that when working in the tens of millions we lose the fractional part of the number entirely!
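To make the growing gap concrete, the sketch below measures the distance from a single-precision value to the next one above it. The helpers `to_f32`, `next_f32_up`, and `spacing_f32` are illustrative names rather than library functions, and `next_f32_up` assumes a positive, finite input.

```python
import struct

def to_f32(x):
    """Round a Python float (a double) to the nearest single-precision value."""
    return struct.unpack(">f", struct.pack(">f", x))[0]

def next_f32_up(x):
    """Next representable single-precision value above a positive, finite x."""
    (bits,) = struct.unpack(">I", struct.pack(">f", x))
    # For positive floats, adding 1 to the bit pattern steps to the next value.
    return struct.unpack(">f", struct.pack(">I", bits + 1))[0]

def spacing_f32(x):
    """Size of one unit in the last place at x."""
    return next_f32_up(x) - to_f32(x)

for value in (1.0, 1_000_000.0, 8_388_608.0, 16_777_216.0):
    print(f"{value:>12.1f}: gap to next float = {spacing_f32(value)}")

# A fraction added to a number in the tens of millions is rounded away.
print(to_f32(10_000_000.3))
```

The gap is 2^-23 at 1.0, 0.0625 at one million, 1.0 at 2^23, and 2.0 at 2^24, so 10,000,000.3 is stored as 10,000,000.0.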

This small per-number error can be plugged into the equations from the last post on error propagation to estimate the cumulative error and loss of accuracy as a calculation involving many floating-point numbers progresses. This is an important factor to consider when dealing with long numerical functions.
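A simple way to watch this accumulation happen is to add the same small value many times. The sketch below is only an experiment, not the error-propagation equations themselves: it emulates single precision in Python by rounding after every addition (the `to_f32` helper is an assumption of this example) and compares the result with a double-precision running total.

```python
import struct

def to_f32(x):
    """Round a Python float (a double) to the nearest single-precision value."""
    return struct.unpack(">f", struct.pack(">f", x))[0]

def sum_f32(term, count):
    """Add term to a running total count times, rounding to single precision each step."""
    term = to_f32(term)
    total = 0.0
    for _ in range(count):
        total = to_f32(total + term)
    return total

def sum_f64(term, count):
    """Same loop using Python's native double-precision floats."""
    total = 0.0
    for _ in range(count):
        total += term
    return total

n = 1_000_000
single = sum_f32(0.1, n)
double = sum_f64(0.1, n)
print("single:", single, "error:", abs(single - 100_000.0))
print("double:", double, "error:", abs(double - 100_000.0))
```

Each individual rounding is tiny, but as the running total grows, so does the gap between representable values, and the single-precision result drifts far further from 100,000 than the double-precision one.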

Usage

So when we come to use floating-point numbers in large calculations, we can estimate how much error we expect to accumulate as each intermediate result is rounded (or truncated) to the nearest representable floating-point number. This error must be at an acceptable level for the function we are trying to write; otherwise we may need to turn to alternative algorithms or more precise data types to represent the data.
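As one example of an alternative algorithm, the sketch below uses Kahan (compensated) summation, a technique I am bringing in for illustration rather than one this post has covered. It keeps a second variable holding the rounding error of each addition and feeds it back into the next one. Single precision is again emulated by rounding after every operation, and all function names are hypothetical.

```python
import struct

def to_f32(x):
    """Round a Python float (a double) to the nearest single-precision value."""
    return struct.unpack(">f", struct.pack(">f", x))[0]

def naive_sum_f32(term, count):
    """Plain running total, rounded to single precision after each add."""
    term = to_f32(term)
    total = 0.0
    for _ in range(count):
        total = to_f32(total + term)
    return total

def kahan_sum_f32(term, count):
    """Kahan summation with every intermediate rounded to single precision."""
    term = to_f32(term)
    total = 0.0
    compensation = 0.0  # running estimate of the low-order bits lost so far
    for _ in range(count):
        y = to_f32(term - compensation)
        t = to_f32(total + y)
        # (t - total) is what was actually added; subtracting y leaves the rounding error.
        compensation = to_f32(to_f32(t - total) - y)
        total = t
    return total

n = 100_000
print("naive:", naive_sum_f32(0.1, n))
print("kahan:", kahan_sum_f32(0.1, n))
```

Whether the extra arithmetic is worth it compared with simply switching to double depends on the acceptable error level for the function in question.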