Floating-point representation in programming is inherently error prone as we are not able to perfectly represent real numbers without an infinite number of bits. As a result we have a standard (https://en.wikipedia.org/wiki/IEEE_754) which has some quirky behaviours. One of which is that the error in representing real numbers get larger as the number you are trying to represent moves away from 0. The scale of this error and how fast it grows depends on the number of bits we have to represent that and it is not inherently obvious when this error occurs.

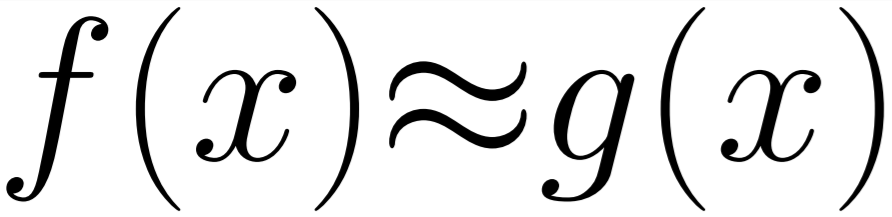

If we have perform multiple arithmetic operations then the rounding error from those calculations could be increasing. To calculate what this error is we need to be able to understand the maximum error for the given number. As a small guide, I have created a graph (below) as an easy reference.

In a later article I will go into how this can effect even simple geometric or purely numerical calculations.

For now I am going to show some code for calculating this error and then using this error to test the accuracy and understanding the actual range of results you will get from using the standard library random function generator.

In this example we want to choose a range for the random number generator, Look at the floating point representation of that range to see how many possible outcomes we could have and then test to see if we do get those outcomes and how frequently each one is hit to see how often we are getting duplicates within a range.

First step - Getting the number of values available in float in our range (between low and high).

1 2 3 4 | float nextAfter = std::nextafterf(_low, _high); double minStep = (double)nextAfter - (double)_low; double numberOfSteps = ((double)_high - (double)_low) / minStep; numberOfSteps += 1.0; |

In this example we are using std::nextafter to find the next possible floating point representation and then casting to a larger bit representation (to reduce error in this calculation) and dividing the range by this value. This will only give correct results for small ranges as the step size will change as the number gets bigger. (To calculate this perfectly for large ranges there are other less concise approaches - this will be shown in a later post).

Next, we want to create our random device and initialise the range for it to work on:

1 2 3 4 | std::random_device rd; std::mt19937 gen(rd()); std::uniform_real_distribution<float> uniformRealDistribution(_low, _high); float randomResult = uniformRealDistribution(gen); |

This code creates a device which produces random numbers in the range between '_low' and '_high'. Pretty standard stuff.

Now we want to create an std::map which holds a floating point result and a counter. This will allow us to count the occurrences of each outcomes from our random number generator and the number of times that number was output.

1 | std::map<float, int> occuranceCounter; |

The final step is simply putting this all together in a function to populate the std::map and output the results

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 | #include <map> #include <random> #include <iostream> void TestRandomDistriubtion(float _low, float _high, int sampleSize) { std::random_device rd; std::mt19937 gen(rd()); std::uniform_real_distribution<float> uniformRealDistribution(_low, _high); std::map<float, int> occuranceCounter; float nextAfter = std::nextafterf(_low, _high); double minStep = (double)nextAfter - (double)_low; double numberOfSteps = ((double)_high - (double)_low) / minStep; std::cout << "Max Possible outcomes: " << numberOfSteps + 1 << ". "; for (unsigned int i = 0; i < sampleSize; i++ ) { float randomResult = uniformRealDistribution(gen); if (occuranceCounter.find(randomResult) == occuranceCounter.end()) occuranceCounter[randomResult] = 1; else occuranceCounter[randomResult] += 1; } int size = occuranceCounter.size(); int counter = 0; for (auto& kv : occuranceCounter) { if (kv.second != 1) { counter++; } } float percent = (float)counter / (float)size; std::cout << "repeat: " << percent*100.f << "%. Total numbers is " << size << ".\n"; } |

This function will then output text like this for 10000 samples:

Max Possible outcomes: 1.04858e+06. repeat: 0.401647%. Total numbers is 9959.

And then if we write a loop we can look at the output of this for different magnitudes of input values:

Testing for values from 1 to 2 ================== Max Possible outcomes: 8388609. repeat: 0.080064051%. Total numbers is 9992. ================== Testing for values from 10 to 11 ================== Max Possible outcomes: 1048577. repeat: 0.41168794%. Total numbers is 9959. ================== Testing for values from 100 to 101 ================== Max Possible outcomes: 131073. repeat: 3.5566156%. Total numbers is 9644. ================== Testing for values from 1000 to 1001 ================== Max Possible outcomes: 16385. repeat: 27.622238%. Total numbers is 7465. ================== Testing for values from 10000 to 10001 ================== Max Possible outcomes: 1025. repeat: 100%. Total numbers is 1025. ================== Testing for values from 100000 to 100001 ================== Max Possible outcomes: 129. repeat: 100%. Total numbers is 129. ================== Testing for values from 1000000 to 1000001 ================== Max Possible outcomes: 17. repeat: 100%. Total numbers is 17. ================== Testing for values from 10000000 to 10000001 ================== Max Possible outcomes: 2. repeat: 100%. Total numbers is 2. ==================

If we look at the results we can see how the number of possible values match the graph at the start of this post. We are slowly reducing the maximum number of outcomes from our random number generator until the point where we can only get the left or right bound value when we are at 10 million. Which at such a large number is quite understandable and probably something you have ran into when working with float numbers in similar situations. However, you will notice that at even small numbers like between 1000-1001 we are quantised to only 16385 possible results. For a lot of applications that is a huge margin of error compared to the accuracy you get between zero and one.

To resolve this we can use bigger data types but that isn't always ideal. So quite a lot of papers recommend working at offsets and then rounding back up to reduce error, but this doesnt totally solve the problem. I will be doing more posts in this area as I finish my research in a related topic where I will be going over some of the coping solutions applications which don't need 100% accuracy of results frequently use.

Full source code for generating the above output:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 | #include <map> #include <random> #include <iostream> #include <iomanip> void TestRandomDistriubtion(float _low, float _high, int sampleSize) { std::random_device rd; std::mt19937 gen(rd()); std::uniform_real_distribution<float> uniformRealDistribution(_low, _high); std::map<float, int> occuranceCounter; float nextAfter = std::nextafterf(_low, _high); double minStep = (double)nextAfter - (double)_low; double numberOfSteps = ((double)_high - (double)_low) / minStep; std::cout << "Max Possible outcomes: " << numberOfSteps + 1 << ". "; for (unsigned int i = 0; i < sampleSize; i++) { float randomResult = uniformRealDistribution(gen); if (occuranceCounter.find(randomResult) == occuranceCounter.end()) occuranceCounter[randomResult] = 1; else occuranceCounter[randomResult] += 1; } int size = occuranceCounter.size(); int counter = 0; for (auto& kv : occuranceCounter) { if (kv.second != 1) { counter++; } } float percent = (float)counter / (float)size; std::cout << "repeat: " << percent*100.f << "%. Total numbers is " << size << ".\n"; } int main() { float minTestValues[] = { 1.f, 10.f, 100.f, 1000.f, 10000.f, 100000.f, 1000000.f, 10000000.f }; int arraySize = 8; int sampleCount = 10000; for (int i = 0; i < arraySize; i++) { std::cout << std::setprecision(10) << "Testing for values from " << minTestValues[i] << " to " << minTestValues[i] + 1.f << "\n==================\n" << std::setprecision(8); TestRandomDistriubtion(minTestValues[i], minTestValues[i] + 1.f, sampleCount); std::cout << "==================\n"; } return 0; } |